# Introduction to the Administration API

Source: https://docs.corti.ai/about/admin-api

Programmatic access to manage your Corti API Console account

## What is the Admin API?

The `Admin API` lets you manage your Corti API Console programmatically. It is built for administrators who want to automate account operations.

This `Admin API` is separate from the `Corti API` used for speech to text, text generation, and agentic workflows:

* Authentication and scope for the `Admin API` uses email-and-password to obtain a bearer token via `/auth/token`. This token is only used for API administration.

* The `Admin API` endpoints `/customers` and `/users` are only enabled and exposed for projects with Embedded Assistant.

Please [contact us](https://help.corti.app) if you have interest in this functionality or further questions.

### Use Cases

The following functionality is currently supported by the `Admin API`:

| Feature | Functionality | Scope |

| :------------------- | :----------------------------------------------------------------------- | :------------------------------- |

| **Authentication** | Authenticate user and get access token | All projects |

| **Manage Customers** | Create, update, list, and delete customer accounts within your project | Projects with Embedded Assistant |

| **Manage Users** | Create, update, list, and delete users associated with customer accounts | Projects with Embedded Assistant |

Permissions mirror the Corti API Console - only project admins or owners can create, update, or delete resources.

## Quickstart

* Sign up or log in at [console.corti.app](https://corti-api-console.web.app/)

* Ensure your account has a password set

Best practice: use a dedicated service account for Admin API automation. Assign only the minimal required role and rotate credentials regularly.

Call `/auth/token` with your Console email and password to obtain a JWT access token.

See API Reference: [Authenticate user and get access token](/api-reference/admin/auth/authenticate-user-and-get-access-token)

```bash theme={null}

curl -X POST https://api.console.corti.app/functions/v1/public/auth/token \

-H "Content-Type: application/json" \

-d '{

"email": "your-email@example.com",

"password": "your-password"

}'

```

Example response:

```json theme={null}

{

"accessToken": "eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9...",

"tokenType": "bearer",

"expiresIn": 3600

}

```

Include the token in the Authorization header for subsequent requests:

```bash theme={null}

curl -X GET https://api.console.corti.app/functions/v1/public/projects/{projectId}/customers \

-H "Authorization: Bearer eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9..."

```

Tokens expire after `expiresIn` seconds. Once expired, call the `auth/token` endpoint again to obtain a new token.

***

## Top Pages

Obtain an access token

Create a new customer in a project

Create a new user within a customer

Please [contact us](https://help.corti.app) for support or more information

# Compliance & Trust

Source: https://docs.corti.ai/about/compliance

# Errors

Source: https://docs.corti.ai/about/errors

Collection of known errors and solutions

[docs-get-access]: https://docs.corti.ai/get_started/getaccess

[docs-quickstart]: https://docs.corti.ai/get_started/quickstart

[docs-langs]: https://docs.corti.ai/about/languages

[support]: https://help.corti.app

[support-email]: help@corti.ai

## Access forbidden (`403`)

Code: `A0001`

You don't have the necessary permissions to access this feature or

information. Please check [Get access][docs-get-access] and

[Authentication][docs-quickstart].

## Timeout (`504`)

Code: `A0002`

The allowed time for this request has passed. Please try again with smaller

request size or contact the [support][support].

## Bad request (`400`)

Code: `A0003`

Your request couldn't be processed. Please check the relevant documentation,

base URL, endpoint, `Content-Type` header, required fields, field format

(e.g. UUIDs) and try again.

## Invalid token (`403`)

Code: `A0004`

The provided token was incomplete or not in correct format. Please check the

token or contact the [support][support].

## Invalid user (`403`)

Code: `A0005`

The provided token contains an invalid user. Please check the token or contact

the [support][support].

## Invalid ID (`400`)

Code: `A0006`

The provided ID is in an incorrect format or shape. Please check the

documentation for required ID formatting such as UUID.

## Interaction not found (`404`)

Code: `A0007`

The requested interaction could not be found in our system. Ensure the

interaction exists and you have permission to access it through the listing

endpoint.

## Invalid UUID (`400`)

Code: `A0008`

The provided UUID is in an incorrect format or shape. Please check the

documentation for required ID formatting such as UUID.

## Document not found (`404`)

Code: `A0009`

The requested document could not be found in our system. Ensure the document

exists and you have permission to access it through the listing endpoint.

## Error processing (`500`)

Code: `A0010`

The request could not be performed at the moment. Please try again later or

contact the [support][support].

## Recording not found (`404`)

Code: `A0011`

The requested recording could not be found in our system. Ensure the recording

exists and you have permission to access it through the listing endpoint.

## Transcript not found (`404`)

Code: `A0012`

The requested transcript could not be found in our system. Ensure the transcript

exists and you have permission to access it through the listing endpoint.

## Bad query in URL (`400`)

Code: `A0013`

The query parameters are invalid or incomplete. Please verify them against the

endpoint specification.

## Template section not found (`404`)

Code: `A0014`

The requested template section could not be located in our system. Ensure the

template section exists and you have permission to access it.

## Invalid context structure (`400`)

Code: `A0015`

The specified context structure is invalid. Ensure the request matches the

expected format.

## Limit reached (`400`)

Code: `A0016`

The provided input exceeds the maximum allowed limit. Please provide a

different value or check the endpoint's documentation.

## Duplicate value (`400`)

Code: `A0017`

The value provided already exists. Duplicate entries are not allowed for this

field. Refer to the endpoint specification for the field.

## Unsupported language (`400`)

Code: `A0018`

The requested language is not supported by the endpoint. Please check the

[supported languages][docs-langs] or contact the [support][support].

## Fact group not found (`404`)

Code: `A0019`

The requested fact group was not found in the system. Double-check such a group

exists or contact the [support][support].

## Fact not found (`404`)

Code: `A0020`

The requested fact was not found in the system. Double-check such a group

exists or contact the [support][support].

## Insufficient balance (`429`)

Code: `A0021`

You're currently in limited access mode. To unlock full API functionality, please add credits to continue using the API. For assistance, contact [support][support].

## Provided audio is invalid (`400`)

Code: `A0022`

Provided audio is invalid or corrupted. Please check the audio format and try again. For assistance or more information, contact [support][support].

## Invalid scope (`403`)

Code: `A0023`

The provided token does have a forbidden scope for this service. Please check the token scopes or contact the [support][support].

# Help Center

Source: https://docs.corti.ai/about/help

# Introduction to the Corti API

Source: https://docs.corti.ai/about/introduction

Overview of Corti application programming interfaces (API)

Corti is the all-in-one AI stack for healthcare, built for medical accuracy, compliance, and scale. Healthcare's complex language and specialized knowledge demands purpose-built AI infrastructure.

The Corti AI clinical speech understanding, LLMs, and agentic automation are delivered through a single platform that makes it easy to embed AI directly into healthcare workflows. From documentation and coding to billing and referrals, boosting care and fostering provider wellbeing, Corti enables product teams surface critical insights, improve patient outcomes, and ship faster with less effort.

Corti's goal is to be the most complete and accurate **AI infrastructure platform for healthcare developers** building products that demand medical-grade reasoning and enterprise reliability, without any compromises on integration speed or regulatory compliance.

Learn more about what makes the Corti AI platform the right choice for developers and healthcare organizations building the next generation of clinical applications.

***

## Why Choose the Corti API?

| | |

| :-------------------------------- | :-------------------------------------------------------------------------------------------------------------------------------------------------------- |

| **Purpose-built for healthcare** | Optimized for the unique needs and compliance standards of the medical field. |

| **Real-time processing** | Live data streaming with highly accurate fact generation enables instantaneous AI-driven support to integrated applications and healthcare professionals. |

| **Seamless workflow integration** | Designed to work across multiple modalities within clinical and operational workflows. |

| **Customizable and scalable** | Robust and adaptable capabilities to fit your organizational needs. |

Bespoke API integrations

SDKs and web components

Embeddable UI elements

Medical language proficiency

Secure and compliant

Real-time or asynchronous

***

## Integrate seamlessly

Corti AI can be integrated via the Corti API, allowing organizations to build bespoke AI-powered solutions that meet their specific needs. The same API powers [Corti Assistant](/assistant/welcome) - a fully packaged, EHR agnostic, real-time ambient AI scribe that automates documentation, information lookup, medical coding and more. If, however, you want to embed Corti AI into your workflow or customize the interactions, then take a deeper dive into the API documentation.

Corti’s **model network and orchestration layer** for Text and Audio, powering Speech to Text, Text Generation, and Agent capabilities.

The power of reasoning and contextual inference unlocks critical functionality to power healthcare workflows.

Corti’s **model network and orchestration layer** for Text and Audio, powering Speech to Text, Text Generation, and Agent capabilities.

The power of reasoning and contextual inference unlocks critical functionality to power healthcare workflows.

With the Corti API you can build any speech-enabled or text-based workflow for healthcare.

The capabilities of the Corti AI platform can be accessed directly via the API or with the help of SDKs, Web Components, and embeddable applications (as desired).

With the Corti API you can build any speech-enabled or text-based workflow for healthcare.

The capabilities of the Corti AI platform can be accessed directly via the API or with the help of SDKs, Web Components, and embeddable applications (as desired).

***

## Core Capabilities

***

## Core Capabilities

***

## Bringing it All Together

This documentation site outlines how to use the API and provides example workflows.

* Continue on to [How it works](/about/how_it_works) to learn more about the system architecture.

* Documentation pages [welcome](/about/welcome) you to the API and provide explanations of core capabilities.

* The [API Console](/get_started/getaccess/) to create an account and create client credentials so you can begin your journey.

* The [Javascript SDK](/quickstart/javascript-sdk/) page walks through how to get stated quickly with Corti API.

* The [API Reference](/api-reference/welcome) page provides detailed documentation for each available endpoint.

* The [Resources](/about/resources/) page provides release notes and other useful resources.

***

## Bringing it All Together

This documentation site outlines how to use the API and provides example workflows.

* Continue on to [How it works](/about/how_it_works) to learn more about the system architecture.

* Documentation pages [welcome](/about/welcome) you to the API and provide explanations of core capabilities.

* The [API Console](/get_started/getaccess/) to create an account and create client credentials so you can begin your journey.

* The [Javascript SDK](/quickstart/javascript-sdk/) page walks through how to get stated quickly with Corti API.

* The [API Reference](/api-reference/welcome) page provides detailed documentation for each available endpoint.

* The [Resources](/about/resources/) page provides release notes and other useful resources.

If you have any questions about how to implement Corti AI in your healthcare environment or application, then [contact us](https://help.corti.app) for more information.

# Languages

Source: https://docs.corti.ai/about/languages

Learn about how languages are supported in Corti APIs

Corti speech to text and text generation are specifically designed for use in the healthcare domain. Speech to text (STT) language models are designed to balance recognition speed, performance, and accuracy. Text generation LLMs accept various inputs depending on the workflow (e.g., transcripts or facts) and have defined guardrails to support quality assurance of facts and documents outputs.

The `language codes` listed below are used in API requests to define output language for speech to text and document generation.

* Learn more about speech to text endpoints [here](/stt/overview).

* Learn how to query the API for document templates available by language [here](/textgen/templates#retrieving-available-templates).

***

## Speech to Text Performance Tiers

Corti speech to text uses a tier system to categorize functionality and performance that is available per language:

| Tier | Description | Medical Terminology Validation |

| :----------- | :--------------------------------------------------------------------------------------------------------------- | :----------------------------------------------------------------------------------------------------------------------------------------------------------------------------: |

| **Base** | AI-powered speech recognition, ready to integrate with healthcare IT solutions | `Up to 1,000` |

| **Enhanced** | Base plus optimized medical vocabulary for a variety of specialties and improved support for real-time dictation | `1,000-99,999` |

| **Premier** | Enhanced plus speech to text models delivering the best performance in terms of accuracy, quality, and latency | `100,000+` |

***

## Language Availability per Endpoint

The table below summarizes languages supported by the Corti API and how they can be used with speech to text endpoints (`Transcribe`, `Stream`, and `Transcripts`) and text generation endpoints (`Documents`):

| Language | Language Code | ASR Performance | [Transcribe](/api-reference/transcribe) | [Stream](/api-reference/stream) | [Transcripts](/api-reference/transcripts/create-transcript) | [Documents1](/api-reference/documents/generate-document) |

| :---------------- | :------------------: | :-----------------------------------------------------------------------------------------------------------------------------: | :----------------------------------------------------------------------------------: | :----------------------------------------------------------------------------------: | :--------------------------------------------------------------------------------------------------------------: | :-------------------------------------------------------------------------------------------------------------: |

| Arabic | `ar` | Base | | | | |

| Danish | `da` | Premier | | | 2 | |

| Dutch | `nl` | Enhanced | | | | |

| English (US) | `en` or `en-US` | Premier | | | 2 | |

| English (UK) | `en-GB` | Premier | | | 2 | |

| French | `fr` | Premier | | | 2 | |

| German | `de` | Premier | | | 2 | |

| Hungarian | `hu` | Enhanced | | | | 5 |

| Italian | `it` | Base | | | | |

| Norwegian | `no` | Enhanced | | | | |

| Portuguese | `pt` | Base | | | | |

| Spanish | `es` | Base | | | | |

| Swedish | `sv` | Enhanced | | | | |

| Swiss German | `gsw-CH`3 | Enhanced | | 4 | 2 | |

| Swiss High German | `de-CH`3 | Premier | | 4 | 2 | |

**Notes:**

1 Use the language codes listed above for the `outputLanguage` parameter in `POST/documents` requests. Template(s) or section(s) in the defined output must be available for successful document generation.

2 Speech to text accuracy for async audio file processing via `/transcripts` endpoint may be degraded as compared to real-time recognition via the `/transcribe` and `/stream` endpoints. Further model updates are in progress to address the performance limitation.

3 Use language code `gsw-CH` for dialectical Swiss German workflows (e.g., conversational AI scribing), and language code `de-CH` when Swiss High German is spoken (e.g., dictation).

4 For Swiss German `/stream` configuration: Use `gsw-CH` for `primaryLanguage` as you transcribe dialectical spoken to written Swiss High German, and use `de-CH` for the facts `outputLanguage`.

5 Hungarian document generation via default templates upon request.

***

## Languages Available for Exploration

The table below summarizes languages that, upon request, can enabled with `base` tier functionality and performance.

Corti values the opportunity to expand to new markets, but we need your collaboration and partnership in speech-to-text validation and functionality refinement.

Please [contact us](https://help.corti.app) to discuss further.

| Language | Language Code |

| :--------- | :-----------: |

| Bulgarian | `bg` |

| Croatian | `hr` |

| Czech | `cs` |

| Estonian | `et` |

| Finnish | `fi` |

| Greek | `el` |

| Hebrew | `he` |

| Japanese | `ja` |

| Latvian | `lv` |

| Lithuanian | `lt` |

| Maltese | `mt` |

| Mandarin | `cmn` |

| Polish | `pl` |

| Romanian | `ro` |

| Russian | `ru` |

| Slovakian | `sk` |

| Slovenian | `sl` |

| Ukrainian | `uk` |

***

## Language Translation

* Translation (audio capture in one language with transcript output in a different language) is not officially supported in the Corti API at this time.

* Some general support for translation of `transcripts` in English to `facts` in other languages (e.g. German, French, Danish, etc.) is available in [stream](/textgen/facts_realtime#using-the-api) or [extract Facts](/api-reference/facts/extract-facts) requests.

* Additional translation language-pair combinations are not quality assessed or performance benchmarked.

Please [contact us](https://help.corti.app) if you are interested in a language that is not listed here, need help with tiers and endpoint definitions, or have questions about how to use language codes in API requests.

# Public Roadmap

Source: https://docs.corti.ai/about/roadmap

# A2A Protocol (Agent-to-Agent)

Source: https://docs.corti.ai/agentic/a2a-protocol

Learn about the Agent-to-Agent protocol for inter-agent communication

### What is the A2A Protocol

The **Agent-to-Agent (A2A)** protocol is an open standard that enables secure, framework-agnostic communication between autonomous AI agents. Instead of building bespoke integrations whenever you want agents to collaborate, A2A gives Corti-Agentic and other systems a **common language** agents can use to discover, talk to, and delegate work to one another.

For the full technical specification, see the official A2A project docs at [a2a-protocol.org](https://a2a-protocol.org/latest/).

Originally developed by Google and now stewarded under the Linux Foundation, A2A solves a core problem in multi-agent systems: interoperability across ecosystems, languages, and vendors. It lets you connect agents built in Python, JavaScript, Java, Go, .NET, or other languages and have them cooperate on complex workflows without exposing internal agent state or proprietary logic.

### Why Corti-Agentic Uses A2A

We chose A2A because it:

* **Standardizes agent communication.** Agents can talk to each other without siloed, point-to-point integrations. That makes composite workflows easier to build and maintain.

* **Supports real workflows.** A2A includes discovery, task negotiation, and streaming updates, so agents can coordinate long-running or multi-step jobs.

* **Preserves security and opacity.** Agents exchange structured messages without sharing internal memory or tools. That protects intellectual property and keeps interactions predictable.

* **Leverages open tooling.** There are open source SDKs in multiple languages and example implementations you can reuse.

In Corti-Agentic, A2A is the backbone for agent collaboration. Whether you’re orchestrating specialist agents, chaining reasoning tasks, or integrating external agent services, A2A gives you a robust, open foundation you don’t have to reinvent.

### Open Source SDKs and Tooling

For links to Corti’s official SDK and the official A2A project SDKs (Python, JavaScript/TypeScript, Java, Go, and .NET), see **[SDKs & Integrations](/agentic/sdks-integrations)**.

Please [contact us](https://help.corti.app/) if you need more information about the Corti Agentic Framework.

# Create Agent

Source: https://docs.corti.ai/agentic/agents/create-agent

agentic/auto-generated-openapi.yml post /agents

This endpoint allows the creation of a new agent that can be utilized in the `POST /agents/{id}/v1/message:send` endpoint.

# Delete Agent by ID

Source: https://docs.corti.ai/agentic/agents/delete-agent-by-id

agentic/auto-generated-openapi.yml delete /agents/{id}

This endpoint deletes an agent by its identifier. Once deleted, the agent can no longer be used in threads.

# Get Agent by ID

Source: https://docs.corti.ai/agentic/agents/get-agent-by-id

agentic/auto-generated-openapi.yml get /agents/{id}

This endpoint retrieves an agent by its identifier. The agent contains information about its capabilities and the experts it can call.

# Get Agent Card

Source: https://docs.corti.ai/agentic/agents/get-agent-card

agentic/auto-generated-openapi.yml get /agents/{id}/agent-card.json

This endpoint retrieves the agent card in JSON format, which provides metadata about the agent, including its name, description, and the experts it can call.

# Get Context by ID

Source: https://docs.corti.ai/agentic/agents/get-context-by-id

agentic/auto-generated-openapi.yml get /agents/{id}/v1/contexts/{contextId}

This endpoint retrieves all tasks and top-level messages associated with a specific context for the given agent.

# Get Task by ID

Source: https://docs.corti.ai/agentic/agents/get-task-by-id

agentic/auto-generated-openapi.yml get /agents/{id}/v1/tasks/{taskId}

This endpoint retrieves the status and details of a specific task associated with the given agent. It provides information about the task's current state, history, and any artifacts produced during its execution.

# List Agents

Source: https://docs.corti.ai/agentic/agents/list-agents

agentic/auto-generated-openapi.yml get /agents

This endpoint retrieves a list of all agents that can be called by the Corti Agent Framework.

# List Registry Experts

Source: https://docs.corti.ai/agentic/agents/list-registry-experts

agentic/auto-generated-openapi.yml get /agents/registry/experts

This endpoint retrieves the experts registry, which contains information about all available experts that can be referenced when creating agents through the AgentsExpertReference schema.

# Send Message to Agent

Source: https://docs.corti.ai/agentic/agents/send-message-to-agent

agentic/auto-generated-openapi.yml post /agents/{id}/v1/message:send

This endpoint sends a message to the specified agent to start or continue a task. The agent processes the message and returns a response. If the message contains a task ID that matches an ongoing task, the agent will continue that task; otherwise, it will start a new task.

# Update Agent by ID

Source: https://docs.corti.ai/agentic/agents/update-agent-by-id

agentic/auto-generated-openapi.yml patch /agents/{id}

This endpoint updates an existing agent. Only the fields provided in the request body will be updated; other fields will remain unchanged.

# System Architecture

Source: https://docs.corti.ai/agentic/architecture

Learn about the Agentic Framework system architecture

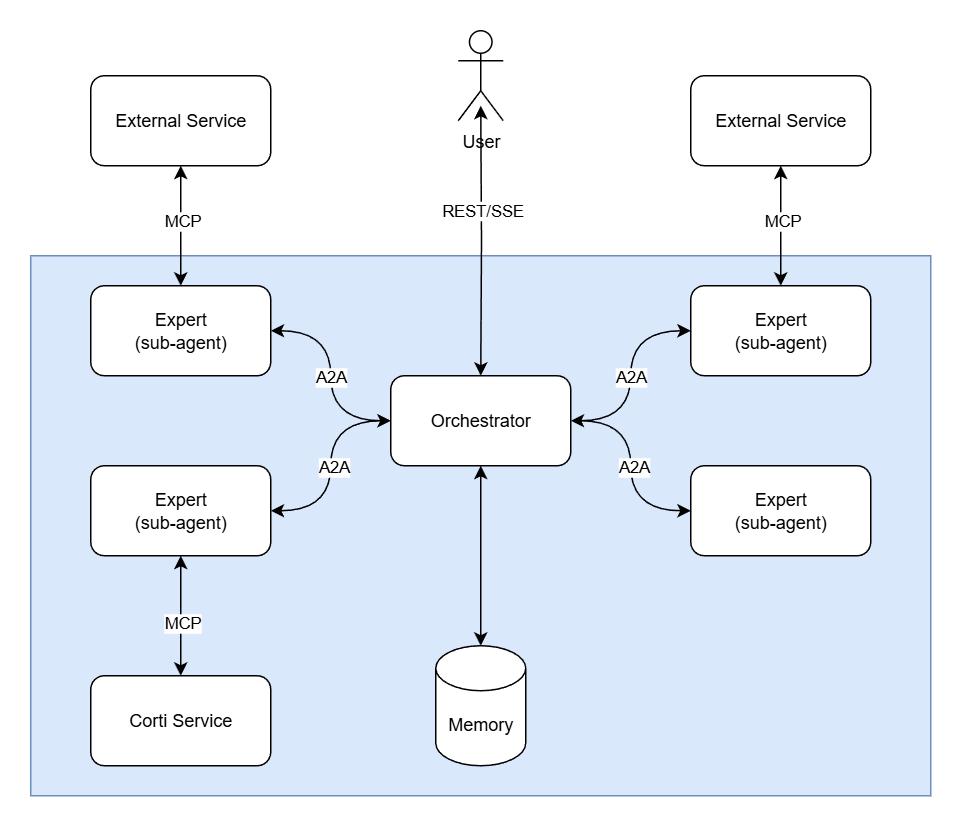

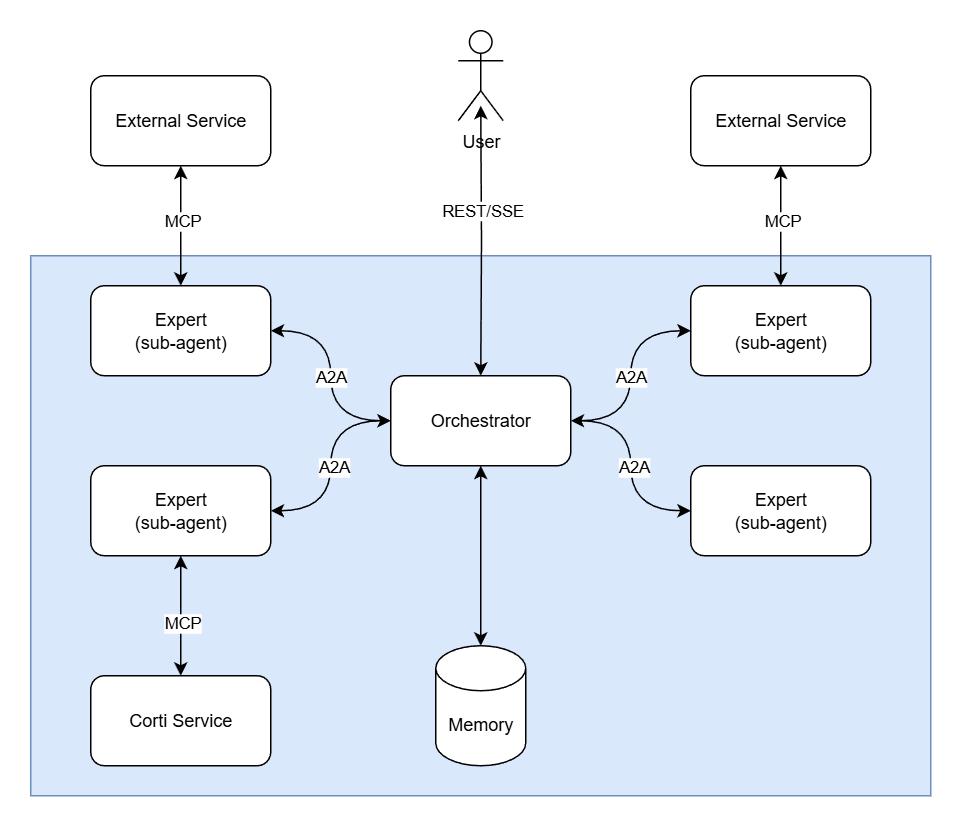

The Corti Agentic Framework adopts a **multi-agent architecture** to power development of healthcare AI solutions. As compared to a monolithic LLM, the Corti Agentic Framework allows for improved specialization and protocol-based composition.

## Architecture Components

The architecture consists of three core components working together:

* **[Orchestrator](/agentic/orchestrator)** — The central coordinator that receives user requests and delegates tasks to specialized Experts via the A2A protocol.

* **[Experts](/agentic/experts)** — Specialized sub-agents that perform domain-specific work, potentially calling external services through MCP.

* **[Memory](/agentic/context-memory)** — Maintains persistent context and state, enabling the Orchestrator to make informed decisions and ensuring continuity across conversations.

Together, this architecture enables complex workflows through protocol-based composition while maintaining strict data isolation and stateless reasoning agents.

## Interaction mechanisms in Corti

The A2A Protocol supports various interaction patterns to accommodate different needs for responsiveness and persistence. Corti builds on these patterns so you can choose the right interaction model for your product:

* **Request/Response (Polling)**: Used for many synchronous Corti APIs where you send input and wait for a single response. For long‑running Corti tasks, your client can poll the task endpoint for status and results.

* **Streaming with Server-Sent Events (SSE)**: Used by Corti for real‑time experiences (for example, ambient notes or live guidance). Your client opens an SSE stream to receive incremental tokens, events, or status updates over an open HTTP connection.

The architecture consists of three core components working together:

* **[Orchestrator](/agentic/orchestrator)** — The central coordinator that receives user requests and delegates tasks to specialized Experts via the A2A protocol.

* **[Experts](/agentic/experts)** — Specialized sub-agents that perform domain-specific work, potentially calling external services through MCP.

* **[Memory](/agentic/context-memory)** — Maintains persistent context and state, enabling the Orchestrator to make informed decisions and ensuring continuity across conversations.

Together, this architecture enables complex workflows through protocol-based composition while maintaining strict data isolation and stateless reasoning agents.

## Interaction mechanisms in Corti

The A2A Protocol supports various interaction patterns to accommodate different needs for responsiveness and persistence. Corti builds on these patterns so you can choose the right interaction model for your product:

* **Request/Response (Polling)**: Used for many synchronous Corti APIs where you send input and wait for a single response. For long‑running Corti tasks, your client can poll the task endpoint for status and results.

* **Streaming with Server-Sent Events (SSE)**: Used by Corti for real‑time experiences (for example, ambient notes or live guidance). Your client opens an SSE stream to receive incremental tokens, events, or status updates over an open HTTP connection.

Please [contact us](https://help.corti.app/) if you need more information about the Corti Agentic Framework.

# Beginners' Guide to Agents

Source: https://docs.corti.ai/agentic/beginners-guide

How LLM agents work in the Corti Agentic Framework

export const Lottie = ({path, width = '100%', maxWidth = '800px', height = 'auto', loop = true, autoplay = true}) => {

const containerRef = useRef(null);

const animationRef = useRef(null);

const scriptRef = useRef(null);

const propsRef = useRef({

path,

loop,

autoplay

});

useEffect(() => {

propsRef.current = {

path,

loop,

autoplay

};

}, [path, loop, autoplay]);

const initializeAnimation = () => {

if (!window.lottie || !containerRef.current) {

return;

}

if (animationRef.current) {

animationRef.current.destroy();

animationRef.current = null;

}

const {path, loop, autoplay} = propsRef.current;

animationRef.current = window.lottie.loadAnimation({

container: containerRef.current,

renderer: 'svg',

loop: loop,

autoplay: autoplay,

path: path

});

};

useEffect(() => {

if (window.lottie) {

initializeAnimation();

return;

}

const existingScript = document.querySelector('script[src="https://cdnjs.cloudflare.com/ajax/libs/bodymovin/5.12.2/lottie.min.js"]');

if (existingScript) {

existingScript.addEventListener('load', initializeAnimation);

return () => {

existingScript.removeEventListener('load', initializeAnimation);

};

}

const script = document.createElement('script');

script.src = 'https://cdnjs.cloudflare.com/ajax/libs/bodymovin/5.12.2/lottie.min.js';

script.async = true;

scriptRef.current = script;

script.onload = initializeAnimation;

document.body.appendChild(script);

return () => {

if (scriptRef.current && document.body.contains(scriptRef.current)) {

document.body.removeChild(scriptRef.current);

scriptRef.current = null;

}

};

}, []);

useEffect(() => {

if (window.lottie) {

initializeAnimation();

}

return () => {

if (animationRef.current) {

animationRef.current.destroy();

animationRef.current = null;

}

};

}, [path, loop, autoplay]);

return ;

};

In healthcare, an **LLM agent** is not a chatbot trying to answer everything on its own. The language model is used primarily for reasoning and planning, understanding a request, breaking it down, and deciding which experts, tools, or data sources are best suited to handle each part of the task.

Instead of relying on internal knowledge, agents retrieve information from trusted external knowledge bases, clinical systems, and customer-owned data at runtime. When appropriate, they can also take controlled actions, such as writing structured data back to an EHR, triggering downstream workflows, or sending information to other systems.

The **Corti Agentic Framework** is the healthcare-grade platform that makes this possible in production. It provides the orchestration layer that allows agents to delegate work to specialized experts, operate within strict safety and governance boundaries, and remain fully auditable. This enables AI systems that can reason, look things up, and act, without guessing or bypassing clinical control.

# Context & Memory

Source: https://docs.corti.ai/agentic/context-memory

Learn how context and memory work in the Corti Agentic Framework

A **context** in the Corti Agentic Framework makes use of memory from previous text and data in the conversation so far—think of it as a thread that maintains conversation history. Understanding how context works is essential for building effective integrations that maintain continuity across multiple messages.

## What is Context?

A `Context` (identified by a server-generated `contextId`) is a logical grouping of related `Messages`, `Tasks`, and `Artifacts`, providing context across a multi-turn "conversation"". It enables you to associate multiple tasks and agents with a single patient encounter, call, or workflow, ensuring continuity and proper scoping of shared knowledge throughout.

The `contextId` is **always created on the server**. You never generate it client-side. This ensures proper state management and prevents conflicts.

### Data Isolation and Scoping

**Contexts provide strict data isolation**: Data can **NEVER** leak across contexts. Each `contextId` creates a completely isolated conversation scope. Messages, tasks, artifacts, and any data within one context are completely inaccessible to agents working in a different context. This ensures:

* **Privacy and security**: Patient data from one encounter cannot accidentally be exposed to another encounter

* **Data integrity**: Information from different workflows remains properly separated

* **Compliance**: You can confidently scope sensitive data to specific contexts without risk of cross-contamination

When you need to share information across contexts, you must explicitly pass it via `DataPart` objects in your messages—there is no automatic data sharing between contexts.

## Using Context for Automatic Memory Management

The simplest way to use context is to let the framework automatically manage conversation memory:

### Workflow Pattern

1. **First message**: Send your message **without** a `contextId`. The server will create a new context automatically.

2. **Response**: The server's response includes the newly created `contextId`.

3. **Subsequent messages**: Include that `contextId` in your requests. Memory from previous messages in that context is automatically managed and available to the agent.

When you include a `contextId` in your request, the agent has access to all previous messages, artifacts, and state within **that specific context only**. Data from other contexts is completely isolated and inaccessible. This enables natural, continuous conversations without manually passing history, while maintaining strict data boundaries between different encounters or workflows.

### Standalone Requests

If you don't want automatic memory management, always send messages **without** a `contextId`. Each message will then be treated as a standalone request without access to prior conversation history. This is useful for:

* One-off queries that don't depend on prior context

* Testing and debugging individual requests

* Scenarios where you want explicit control over what context is included

## Passing Additional Context with Each Request

In addition to automatic memory management via `contextId`, you can pass additional context in each request by including `DataPart` objects in your message. This is useful when you want to provide specific structured data, summaries, or other context that should be considered for that particular request.

```json theme={null}

{

"contextId": "ctx_abc123",

"messages": [

{

"role": "user",

"parts": [

{

"type": "text",

"text": "Generate a summary of this patient encounter"

},

{

"type": "data",

"data": {

"patientId": "pat_12345",

"encounterDate": "2025-12-15",

"chiefComplaint": "Chest pain",

"vitalSigns": {

"bloodPressure": "120/80",

"heartRate": 72,

"temperature": 98.6

}

}

}

]

}

]

}

```

This approach allows you to:

* Provide structured data (patient records, clinical facts, etc.) alongside text

* Include summaries or distilled information from external sources

* Pass metadata or configuration that should be considered for this specific request

* Combine automatic memory (via `contextId`) with explicit context (via `DataPart`)

## How Memory Works

The Corti Agentic Framework uses an intelligent memory system that automatically indexes and stores all content within a context, enabling semantic retrieval when needed.

### Automatic Indexing

Every `TextPart` and `DataPart` you send in messages is automatically indexed and stored in the context's memory. This includes:

* Text content from user and agent messages

* Structured data from `DataPart` objects (patient records, clinical facts, metadata, etc.)

* Artifacts generated by tasks

* Any other content that flows through the context

### Semantic Retrieval

The memory system operates like a RAG (Retrieval Augmented Generation) pipeline. When an agent processes a new message:

1. **Semantic search**: The system performs semantic search across all indexed content in the context's memory

2. **Relevant retrieval**: It retrieves the most semantically relevant information based on the current query or task

3. **Just-in-time injection**: This relevant context is automatically injected into the agent's prompt, ensuring it has access to the right information at the right time

This means you don't need to manually pass all relevant history with each request—the system intelligently retrieves what's needed based on semantic similarity. For example, if you ask "What was the patient's chief complaint?" in a later message, the system will automatically retrieve and include the relevant information from earlier in the conversation, even if it was mentioned many messages ago.

### Benefits

* **Efficient**: Only relevant information is retrieved and used, reducing token usage

* **Automatic**: No need to manually manage what context to include

* **Semantic**: Works based on meaning, not just keyword matching

* **Comprehensive**: All content in the context is searchable and retrievable

## Context vs. Reference Task IDs

The framework provides two mechanisms for linking related work:

* **`contextId`** – Groups multiple related `Messages`, `Tasks`, and `Artifacts` together (think of it as the encounter/call/workflow bucket). This provides automatic memory management and is sufficient for most use cases.

* **`referenceTaskIds`** – An optional list of specific past `Task` IDs within the same context that should be treated as explicit inputs or background. Note that `referenceTaskIds` are scoped to a context—they reference tasks within the same `contextId`.

**In most situations, you can ignore `referenceTaskIds`** since the automatic memory provided by `contextId` is sufficient. Only use `referenceTaskIds` when you need to explicitly direct the agent to pay attention to specific tasks or artifacts within the context, such as in complex multi-step workflows where you want to ensure certain outputs are prioritized.

## Context and Interaction IDs

If you're using contexts alongside Corti's internal interaction representation (for example, when integrating with Corti Assistant or other Corti products that use `interactionId`), note that **these two concepts are currently not linked**.

* **`contextId`** (from the Agentic Framework) and **`interactionId`** (from Corti's internal systems) are separate concepts that you will need to map yourself in your application.

* There is no automatic association between a Corti `interactionId` and an Agentic Framework `contextId`.

**Recommended approach:**

* **Use a fresh context per interaction**: When working with a Corti interaction, create a new `contextId` for that interaction. This keeps data properly scoped and isolated per interaction.

* Store the mapping between your `interactionId` and `contextId`(s) in your own application state or metadata.

* If you need to share data across multiple contexts within the same interaction, explicitly pass it via `DataPart` objects.

We're looking into ways to make the relationship between interactions and contexts more ergonomic if this is relevant to your use case. For now, maintaining your own mapping and using one context per interaction is the recommended pattern.

For more details on how context relates to other core concepts, see [Core Concepts](/agentic/core-concepts).

Please [contact us](https://help.corti.app/) if you need more information about context and memory in the Corti Agentic Framework.

# Core Concepts

Source: https://docs.corti.ai/agentic/core-concepts

Learn the fundamental building blocks of the Corti Agentic Framework

This page adds Corti-specific detail on top of the core A2A concepts. We have tried to adhere as closely as possible to the intended A2A protocol specification — for the canonical definition of these concepts, see the A2A documentation on [Core Concepts and Components in A2A](https://a2a-protocol.org/latest/topics/key-concepts).

The Corti Agentic Framework uses a set of core concepts that define how Corti agents, tools, and external systems interact. Understanding these building blocks is essential for developing on the Corti platform and for integrating your own systems using the A2A Protocol.

## Core Actors

At Corti, these actors typically map to concrete products and integrations:

* **User**: A clinician, contact-center agent, knowledge worker, or an automated service in your environment. The user initiates a request (for example, “summarize this consultation” or “triage this patient”) that requires assistance from one or more Corti-powered agents.

* **A2A Client (Client Agent)**: The application that calls Corti. This is your application/server. The client initiates communication using the A2A Protocol and orchestrates how results are used in your product.

* **A2A Server (Remote Agent)**: A Corti agent or agentic system that exposes an HTTP endpoint implementing the A2A Protocol. It receives requests from clients, processes tasks, and returns results or status updates.

## Fundamental Communication Elements

The following elements are fundamental to A2A communication and how Corti uses them:

A JSON metadata document describing an agent's identity, capabilities, endpoint, skills, and authentication requirements.

**Key Purpose:** Enables Corti and your applications to discover agents and understand how to call them securely and effectively.

A stateful unit of work initiated by an agent, with a unique ID and defined lifecycle.

**Key Purpose:** Powers long‑running operations in Corti (for example, document generation or multi‑step workflows) and enables tracking and collaboration.

A single turn of communication between a client and an agent, containing content and a role ("user" or "agent").

**Key Purpose:** Carries instructions, clinical context, user questions, and agent responses between your application, Corti Assistant, and remote agents.

The fundamental content container (for example, TextPart, FilePart, DataPart) used within Messages and Artifacts.

**Key Purpose:** Lets Corti exchange text, audio transcripts, structured JSON, and files in a consistent way across agents and tools.

A tangible output generated by an agent during a task (for example, a document, image, or structured data).

**Key Purpose:** Represents concrete Corti results such as SOAP notes, call summaries, recommendations, or other structured outputs.

A server-generated identifier (`contextId`) that logically groups multiple related `Task` objects, providing context across a series of interactions.

**Key Purpose:** Enables you to associate multiple tasks and agents with a single patient encounter, call, or workflow, ensuring continuity and proper scoping of shared knowledge throughout an interaction.

## Agent Cards in Corti

The Agent Card is a JSON document that serves as a digital business card for initial discovery and interaction setup. It provides essential metadata about an agent. Clients parse this information to determine if an agent is suitable for a given task, how to structure requests, and how to communicate securely. Key information includes identity, service endpoint (URL), A2A capabilities, authentication requirements, and a list of skills.

Within Corti, Agent Cards are how you:

* Discover first‑party Corti agents and their capabilities.

* Register and describe your own remote agents so Corti workflows can call them.

* Declare authentication and compliance requirements up front, before any PHI or sensitive data is exchanged.

## Messages and Parts in Corti

A message represents a single turn of communication between a client and an agent. It includes a role ("user" or "agent") and a unique `messageId`. It contains one or more Part objects, which are granular containers for the actual content. This design allows A2A to be modality independent and lets Corti mix clinical text, transcripts, and structured data safely in a single exchange.

The primary part kinds are:

* `TextPart`: Contains plain textual content, such as instructions, questions, or generated notes.

* `DataPart`: Carries structured JSON data. This is useful for clinical facts, workflow parameters, EHR identifiers, or any machine‑readable information you exchange with Corti.

* `FilePart`: Represents a file (for example, a PDF discharge letter or an audio recording). It can be transmitted either inline (Base64 encoded) or through a URI. It includes metadata like "name" and "mimeType". This is not yet fully supported.

## Artifacts in Corti

An artifact represents a tangible output or a concrete result generated by a remote agent during task processing. Unlike general messages, artifacts are the actual deliverables. An artifact has a unique `artifactId`, a human-readable name, and consists of one or more part objects. Artifacts are closely tied to the task lifecycle and can be streamed incrementally to the client.

In Corti, artifacts typically correspond to business outputs such as:

* Clinical notes (for example, SOAP notes, discharge summaries).

* Extracted clinical facts or coding suggestions.

* Generated documents, checklists, or other workflow‑specific artifacts.

## Agent response: Task or Message

The agent response can be a new `Task` (when the agent needs to perform a long-running operation) or a `Message` (when the agent can respond immediately).

On the Corti platform this means:

* For quick operations (for example, a short completion or a classification), your agent often responds with a `Message`.

* For longer workflows (for example, generating a full clinical document, coordinating multiple tools, or waiting on downstream systems), your agent responds with a `Task` that you can monitor and later retrieve artifacts from.

# Experts

Source: https://docs.corti.ai/agentic/experts

Learn about Experts available for use with the AI Agent

An **Expert** is an LLM-powered capability that an AI agent can utilize. Experts are designed to complete small, discrete tasks efficiently, enabling the Orchestrator to compose complex workflows by chaining multiple experts together.

## What is Context?

A `Context` (identified by a server-generated `contextId`) is a logical grouping of related `Messages`, `Tasks`, and `Artifacts`, providing context across a multi-turn "conversation"". It enables you to associate multiple tasks and agents with a single patient encounter, call, or workflow, ensuring continuity and proper scoping of shared knowledge throughout.

The `contextId` is **always created on the server**. You never generate it client-side. This ensures proper state management and prevents conflicts.

### Data Isolation and Scoping

**Contexts provide strict data isolation**: Data can **NEVER** leak across contexts. Each `contextId` creates a completely isolated conversation scope. Messages, tasks, artifacts, and any data within one context are completely inaccessible to agents working in a different context. This ensures:

* **Privacy and security**: Patient data from one encounter cannot accidentally be exposed to another encounter

* **Data integrity**: Information from different workflows remains properly separated

* **Compliance**: You can confidently scope sensitive data to specific contexts without risk of cross-contamination

When you need to share information across contexts, you must explicitly pass it via `DataPart` objects in your messages—there is no automatic data sharing between contexts.

## Using Context for Automatic Memory Management

The simplest way to use context is to let the framework automatically manage conversation memory:

### Workflow Pattern

1. **First message**: Send your message **without** a `contextId`. The server will create a new context automatically.

2. **Response**: The server's response includes the newly created `contextId`.

3. **Subsequent messages**: Include that `contextId` in your requests. Memory from previous messages in that context is automatically managed and available to the agent.

When you include a `contextId` in your request, the agent has access to all previous messages, artifacts, and state within **that specific context only**. Data from other contexts is completely isolated and inaccessible. This enables natural, continuous conversations without manually passing history, while maintaining strict data boundaries between different encounters or workflows.

### Standalone Requests

If you don't want automatic memory management, always send messages **without** a `contextId`. Each message will then be treated as a standalone request without access to prior conversation history. This is useful for:

* One-off queries that don't depend on prior context

* Testing and debugging individual requests

* Scenarios where you want explicit control over what context is included

## Passing Additional Context with Each Request

In addition to automatic memory management via `contextId`, you can pass additional context in each request by including `DataPart` objects in your message. This is useful when you want to provide specific structured data, summaries, or other context that should be considered for that particular request.

```json theme={null}

{

"contextId": "ctx_abc123",

"messages": [

{

"role": "user",

"parts": [

{

"type": "text",

"text": "Generate a summary of this patient encounter"

},

{

"type": "data",

"data": {

"patientId": "pat_12345",

"encounterDate": "2025-12-15",

"chiefComplaint": "Chest pain",

"vitalSigns": {

"bloodPressure": "120/80",

"heartRate": 72,

"temperature": 98.6

}

}

}

]

}

]

}

```

This approach allows you to:

* Provide structured data (patient records, clinical facts, etc.) alongside text

* Include summaries or distilled information from external sources

* Pass metadata or configuration that should be considered for this specific request

* Combine automatic memory (via `contextId`) with explicit context (via `DataPart`)

## How Memory Works

The Corti Agentic Framework uses an intelligent memory system that automatically indexes and stores all content within a context, enabling semantic retrieval when needed.

### Automatic Indexing

Every `TextPart` and `DataPart` you send in messages is automatically indexed and stored in the context's memory. This includes:

* Text content from user and agent messages

* Structured data from `DataPart` objects (patient records, clinical facts, metadata, etc.)

* Artifacts generated by tasks

* Any other content that flows through the context

### Semantic Retrieval

The memory system operates like a RAG (Retrieval Augmented Generation) pipeline. When an agent processes a new message:

1. **Semantic search**: The system performs semantic search across all indexed content in the context's memory

2. **Relevant retrieval**: It retrieves the most semantically relevant information based on the current query or task

3. **Just-in-time injection**: This relevant context is automatically injected into the agent's prompt, ensuring it has access to the right information at the right time

This means you don't need to manually pass all relevant history with each request—the system intelligently retrieves what's needed based on semantic similarity. For example, if you ask "What was the patient's chief complaint?" in a later message, the system will automatically retrieve and include the relevant information from earlier in the conversation, even if it was mentioned many messages ago.

### Benefits

* **Efficient**: Only relevant information is retrieved and used, reducing token usage

* **Automatic**: No need to manually manage what context to include

* **Semantic**: Works based on meaning, not just keyword matching

* **Comprehensive**: All content in the context is searchable and retrievable

## Context vs. Reference Task IDs

The framework provides two mechanisms for linking related work:

* **`contextId`** – Groups multiple related `Messages`, `Tasks`, and `Artifacts` together (think of it as the encounter/call/workflow bucket). This provides automatic memory management and is sufficient for most use cases.

* **`referenceTaskIds`** – An optional list of specific past `Task` IDs within the same context that should be treated as explicit inputs or background. Note that `referenceTaskIds` are scoped to a context—they reference tasks within the same `contextId`.

**In most situations, you can ignore `referenceTaskIds`** since the automatic memory provided by `contextId` is sufficient. Only use `referenceTaskIds` when you need to explicitly direct the agent to pay attention to specific tasks or artifacts within the context, such as in complex multi-step workflows where you want to ensure certain outputs are prioritized.

## Context and Interaction IDs

If you're using contexts alongside Corti's internal interaction representation (for example, when integrating with Corti Assistant or other Corti products that use `interactionId`), note that **these two concepts are currently not linked**.

* **`contextId`** (from the Agentic Framework) and **`interactionId`** (from Corti's internal systems) are separate concepts that you will need to map yourself in your application.

* There is no automatic association between a Corti `interactionId` and an Agentic Framework `contextId`.

**Recommended approach:**

* **Use a fresh context per interaction**: When working with a Corti interaction, create a new `contextId` for that interaction. This keeps data properly scoped and isolated per interaction.

* Store the mapping between your `interactionId` and `contextId`(s) in your own application state or metadata.

* If you need to share data across multiple contexts within the same interaction, explicitly pass it via `DataPart` objects.

We're looking into ways to make the relationship between interactions and contexts more ergonomic if this is relevant to your use case. For now, maintaining your own mapping and using one context per interaction is the recommended pattern.

For more details on how context relates to other core concepts, see [Core Concepts](/agentic/core-concepts).

Please [contact us](https://help.corti.app/) if you need more information about context and memory in the Corti Agentic Framework.

# Core Concepts

Source: https://docs.corti.ai/agentic/core-concepts

Learn the fundamental building blocks of the Corti Agentic Framework

This page adds Corti-specific detail on top of the core A2A concepts. We have tried to adhere as closely as possible to the intended A2A protocol specification — for the canonical definition of these concepts, see the A2A documentation on [Core Concepts and Components in A2A](https://a2a-protocol.org/latest/topics/key-concepts).

The Corti Agentic Framework uses a set of core concepts that define how Corti agents, tools, and external systems interact. Understanding these building blocks is essential for developing on the Corti platform and for integrating your own systems using the A2A Protocol.

## Core Actors

At Corti, these actors typically map to concrete products and integrations:

* **User**: A clinician, contact-center agent, knowledge worker, or an automated service in your environment. The user initiates a request (for example, “summarize this consultation” or “triage this patient”) that requires assistance from one or more Corti-powered agents.

* **A2A Client (Client Agent)**: The application that calls Corti. This is your application/server. The client initiates communication using the A2A Protocol and orchestrates how results are used in your product.

* **A2A Server (Remote Agent)**: A Corti agent or agentic system that exposes an HTTP endpoint implementing the A2A Protocol. It receives requests from clients, processes tasks, and returns results or status updates.

## Fundamental Communication Elements

The following elements are fundamental to A2A communication and how Corti uses them:

A JSON metadata document describing an agent's identity, capabilities, endpoint, skills, and authentication requirements.

**Key Purpose:** Enables Corti and your applications to discover agents and understand how to call them securely and effectively.

A stateful unit of work initiated by an agent, with a unique ID and defined lifecycle.

**Key Purpose:** Powers long‑running operations in Corti (for example, document generation or multi‑step workflows) and enables tracking and collaboration.

A single turn of communication between a client and an agent, containing content and a role ("user" or "agent").

**Key Purpose:** Carries instructions, clinical context, user questions, and agent responses between your application, Corti Assistant, and remote agents.

The fundamental content container (for example, TextPart, FilePart, DataPart) used within Messages and Artifacts.

**Key Purpose:** Lets Corti exchange text, audio transcripts, structured JSON, and files in a consistent way across agents and tools.

A tangible output generated by an agent during a task (for example, a document, image, or structured data).

**Key Purpose:** Represents concrete Corti results such as SOAP notes, call summaries, recommendations, or other structured outputs.

A server-generated identifier (`contextId`) that logically groups multiple related `Task` objects, providing context across a series of interactions.

**Key Purpose:** Enables you to associate multiple tasks and agents with a single patient encounter, call, or workflow, ensuring continuity and proper scoping of shared knowledge throughout an interaction.

## Agent Cards in Corti

The Agent Card is a JSON document that serves as a digital business card for initial discovery and interaction setup. It provides essential metadata about an agent. Clients parse this information to determine if an agent is suitable for a given task, how to structure requests, and how to communicate securely. Key information includes identity, service endpoint (URL), A2A capabilities, authentication requirements, and a list of skills.

Within Corti, Agent Cards are how you:

* Discover first‑party Corti agents and their capabilities.

* Register and describe your own remote agents so Corti workflows can call them.

* Declare authentication and compliance requirements up front, before any PHI or sensitive data is exchanged.

## Messages and Parts in Corti

A message represents a single turn of communication between a client and an agent. It includes a role ("user" or "agent") and a unique `messageId`. It contains one or more Part objects, which are granular containers for the actual content. This design allows A2A to be modality independent and lets Corti mix clinical text, transcripts, and structured data safely in a single exchange.

The primary part kinds are:

* `TextPart`: Contains plain textual content, such as instructions, questions, or generated notes.

* `DataPart`: Carries structured JSON data. This is useful for clinical facts, workflow parameters, EHR identifiers, or any machine‑readable information you exchange with Corti.

* `FilePart`: Represents a file (for example, a PDF discharge letter or an audio recording). It can be transmitted either inline (Base64 encoded) or through a URI. It includes metadata like "name" and "mimeType". This is not yet fully supported.

## Artifacts in Corti

An artifact represents a tangible output or a concrete result generated by a remote agent during task processing. Unlike general messages, artifacts are the actual deliverables. An artifact has a unique `artifactId`, a human-readable name, and consists of one or more part objects. Artifacts are closely tied to the task lifecycle and can be streamed incrementally to the client.

In Corti, artifacts typically correspond to business outputs such as:

* Clinical notes (for example, SOAP notes, discharge summaries).

* Extracted clinical facts or coding suggestions.

* Generated documents, checklists, or other workflow‑specific artifacts.

## Agent response: Task or Message

The agent response can be a new `Task` (when the agent needs to perform a long-running operation) or a `Message` (when the agent can respond immediately).

On the Corti platform this means:

* For quick operations (for example, a short completion or a classification), your agent often responds with a `Message`.

* For longer workflows (for example, generating a full clinical document, coordinating multiple tools, or waiting on downstream systems), your agent responds with a `Task` that you can monitor and later retrieve artifacts from.

# Experts

Source: https://docs.corti.ai/agentic/experts

Learn about Experts available for use with the AI Agent

An **Expert** is an LLM-powered capability that an AI agent can utilize. Experts are designed to complete small, discrete tasks efficiently, enabling the Orchestrator to compose complex workflows by chaining multiple experts together.

## Expert Registry

Corti maintains a **registry of experts** that includes both first-party experts built by Corti and third-party integrations. You can browse and discover available experts through the [Expert Registry API](/agentic/agents/list-agent) endpoint, which returns information about all available experts including their capabilities, descriptions, and configuration requirements.

The registry includes experts for various healthcare use cases such as:

* Clinical reference lookups

* Medical coding

* Document generation

* Data extraction

* And more

## Bring Your Own Expert

You can create custom experts by exposing an MCP (Model Context Protocol) server. When you register your MCP server, Corti wraps it in a custom LLM agent with a system prompt that you can control. This allows you to:

* Integrate your own tools and data sources

* Create domain-specific experts tailored to your workflows

* Maintain control over the expert's behavior through custom system prompts

* Leverage Corti's orchestration and memory management while using your own tools

### Expert Configuration

When creating a custom expert, you provide configuration that includes:

* **Expert metadata**: ID, name, and description

* **System prompt**: Controls how the LLM agent behaves and reasons about tasks

* **MCP server configuration**: Details about your MCP server including transport type, authorization, and connection details

```json Expert Configuration expandable theme={null}

[

{

"type": "expert",

"id": "ecg_interpreter",

"name": "ECG Interpreter",

"description": "Interprets 12 lead ECGs.",

"systemPrompt": "You are an expert ECG interpreter.",

"mcpServers": [

{

"id": "srv1",

"name": "ECG API Svc",

"transportType": "streamable_http",

"authorizationType": "none",

"url": "https://api.ecg.com/x"

}

]

}

]

```

### MCP Server Requirements

Your MCP server must:

* Implement the [Model Context Protocol](https://modelcontextprotocol.io/) specification

* Expose tools via the standard MCP `tools/list` and `tools/call` endpoints

* Handle authentication

Once registered, your custom expert becomes available to the Orchestrator and can be used alongside Corti's built-in experts in multi-expert workflows.

## Multi-Agent Composition

This feature is coming soon.

We're working on exposing A2A (Agent-to-Agent) endpoints that will allow you to attach multiple agents together, enabling more sophisticated multi-agent workflows. This will provide:

* Direct agent-to-agent communication using the A2A protocol

* Composition of complex workflows across multiple agents

* Fine-grained control over agent interactions and data flow

For now, the Orchestrator handles expert composition automatically. When A2A endpoints are available, you'll be able to build custom agent networks while still leveraging Corti's orchestration capabilities.

## Direct Expert Calls

This feature is coming soon.

We're also working on enabling direct calls to experts, allowing you to use them directly in your workflows rather than only through agents. This will provide:

* Direct API access to individual experts

* Integration of experts into custom workflows

* More flexible composition patterns beyond agent-based orchestration

**While AI chat is a useful mechanism, it's not the only option!**

The Corti Agentic Framework is API-first, enabling synchronous or async usage across a range of modalities: scheduled batch jobs, clinical event triggers, UI widgets, and direct EHR system calls.

[Let us know](https://help.corti.app) what types of use cases you're exploring, from doctor-facing chat bots to system-facing automation backends.

Please [contact us](https://help.corti.app/) if you need more information about Experts or creating custom experts in the Corti Agentic Framework.

# Amboss Researcher

Source: https://docs.corti.ai/agentic/experts/amboss-researcher

Learn about how the Amboss Researcher expert works

Agent framework and tools are currently `under development`. API details subject to change ahead of general release.

The **Amboss Researcher** expert provides access to Amboss's comprehensive medical knowledge base, enabling AI agents to retrieve evidence-based clinical information, medical concepts, and educational content.

Amboss Researcher is particularly useful for clinical decision support, medical education, and accessing up-to-date medical knowledge during patient care workflows.

## Capabilities

The Amboss Researcher expert can:

* Search and retrieve medical concepts and clinical information

* Access evidence-based medical content

* Provide structured medical knowledge for clinical workflows

* Support medical education and training scenarios

## Use Cases

* Clinical decision support during patient consultations

* Medical education and training

* Quick reference lookups for medical concepts

* Evidence-based practice support

## Detailed information

The Amboss Researcher expert integrates with Amboss's medical knowledge platform to provide reliable, evidence-based medical information. When invoked by an AI agent, it can search Amboss's database and return structured medical content that can be used to inform clinical decisions or provide educational context.

# ClinicalTrials.gov

Source: https://docs.corti.ai/agentic/experts/clinicaltrials-gov

Learn about how the ClinicalTrials.gov expert works

Agent framework and tools are currently `under development`. API details subject to change ahead of general release.

The **ClinicalTrials.gov** expert enables AI agents to search and retrieve information from ClinicalTrials.gov, the U.S. National Library of Medicine's database of privately and publicly funded clinical studies.

ClinicalTrials.gov is the primary resource for finding ongoing and completed clinical trials, helping connect patients with research opportunities.

## Capabilities

The ClinicalTrials.gov expert can:

* Search ClinicalTrials.gov's database of clinical studies

* Retrieve trial information including eligibility criteria, locations, and status

* Find relevant clinical trials based on medical conditions or interventions

* Access trial protocols and study details

## Use Cases

* Finding relevant clinical trials for patients

* Research study discovery

* Accessing trial protocols and eligibility criteria

* Clinical research support

## Detailed information

The ClinicalTrials.gov expert integrates with the ClinicalTrials.gov database, which contains information about clinical studies conducted around the world. When invoked by an AI agent, it can search for relevant trials based on medical conditions, interventions, or other criteria, helping healthcare providers identify research opportunities for their patients and access detailed trial information.

# DrugBank

Source: https://docs.corti.ai/agentic/experts/drugbank

Learn about how the DrugBank expert works

Agent framework and tools are currently `under development`. API details subject to change ahead of general release.

The **DrugBank** expert provides AI agents with access to DrugBank, a comprehensive database containing detailed drug and drug target information.

DrugBank is an essential resource for drug information, interactions, pharmacology, and medication-related queries.

## Capabilities

The DrugBank expert can:

* Search DrugBank's database for drug information

* Retrieve drug interactions, contraindications, and warnings

* Access pharmacological data and drug properties

* Find medication-related information and dosing guidelines

## Use Cases

* Drug interaction checking

* Medication information lookups

* Pharmacological research

* Clinical decision support for prescribing

## Detailed information

The DrugBank expert integrates with DrugBank's comprehensive pharmaceutical knowledge base, which contains detailed information about drugs, their mechanisms of action, interactions, pharmacokinetics, and pharmacodynamics. When invoked by an AI agent, it can retrieve critical medication information to support safe prescribing practices and clinical decision-making.

# Medical Calculator

Source: https://docs.corti.ai/agentic/experts/medical-calculator

Learn about how the Medical Calculator expert works

Agent framework and tools are currently `under development`. API details subject to change ahead of general release.

The **Medical Calculator** expert enables AI agents to perform medical calculations, including clinical scores, dosing calculations, risk assessments, and other healthcare-related computations.

Medical Calculator ensures accurate clinical calculations, reducing the risk of manual calculation errors in critical healthcare scenarios.

## Capabilities

The Medical Calculator expert can:

* Perform clinical scoring calculations (e.g., CHADS2-VASc, APACHE, etc.)

* Calculate medication dosages based on patient parameters

* Compute risk assessments and probability scores

* Execute various medical formulas and algorithms

## Use Cases

* Clinical risk scoring and assessment

* Medication dosing calculations

* Laboratory value interpretations

* Clinical decision support calculations

## Detailed information

The Medical Calculator expert provides a comprehensive set of medical calculation capabilities, including clinical scores, dosing formulas, risk assessments, and other healthcare computations. It ensures accuracy and consistency in calculations that are critical for patient care, reducing the risk of errors that can occur with manual calculations.

# Medical Coding

Source: https://docs.corti.ai/agentic/experts/medical-coding

Learn about how the Medical Coding expert works

Agent framework and tools are currently `under development`. API details subject to change ahead of general release.

The **Medical Coding** expert provides AI agents with the ability to assign appropriate medical codes (such as ICD-10, CPT, or other coding systems) based on clinical documentation and patient information.

Medical Coding is essential for billing, claims processing, and maintaining accurate medical records that comply with healthcare coding standards.

## Capabilities

The Medical Coding expert can:

* Assign appropriate medical codes from various coding systems

* Analyze clinical documentation to identify codeable conditions

* Suggest codes based on diagnoses, procedures, and clinical findings

* Ensure compliance with coding standards and guidelines

## Use Cases

* Automated medical coding for billing and claims

* Clinical documentation coding assistance

* Code validation and verification

* Revenue cycle management support

## Detailed information

The Medical Coding expert analyzes clinical documentation, patient records, and medical narratives to identify and assign appropriate medical codes. It supports various coding systems including ICD-10, CPT, HCPCS, and others. The expert can help ensure accurate coding, reduce manual coding errors, and improve efficiency in healthcare administrative workflows.

# Posos

Source: https://docs.corti.ai/agentic/experts/posos

Learn about how the Posos expert works

Agent framework and tools are currently `under development`. API details subject to change ahead of general release.

The **Posos** expert provides AI agents with access to Posos's medical knowledge platform, enabling retrieval of clinical information and medical reference data.

Posos offers comprehensive medical reference information that supports clinical decision-making and medical education.

## Capabilities

The Posos expert can:

* Access Posos's medical knowledge database

* Retrieve clinical reference information

* Provide medical content and educational materials

* Support clinical workflows with authoritative medical data

## Use Cases

* Clinical reference lookups

* Medical information retrieval

* Supporting clinical decision-making

* Medical education and training

## Detailed information

The Posos expert integrates with Posos's medical knowledge platform to provide access to their comprehensive database of medical information. When invoked by an AI agent, it can search and retrieve relevant clinical reference data, medical content, and educational materials that support healthcare workflows and clinical decision-making processes.

# PubMed

Source: https://docs.corti.ai/agentic/experts/pubmed

Learn about how the PubMed expert works

Agent framework and tools are currently `under development`. API details subject to change ahead of general release.

The **PubMed** expert provides AI agents with access to PubMed, the comprehensive database of biomedical literature maintained by the National Library of Medicine.

PubMed is the go-to resource for accessing peer-reviewed medical research, clinical studies, and scientific publications.

## Capabilities

The PubMed expert can:

* Search PubMed's database of biomedical literature

* Retrieve research papers, clinical studies, and scientific articles

* Access abstracts and metadata for publications

* Find relevant research based on medical queries

## Use Cases

* Literature reviews and research

* Evidence-based practice support

* Finding relevant clinical studies

* Accessing peer-reviewed medical research

## Detailed information

The PubMed expert integrates with PubMed's extensive database, which contains millions of citations from biomedical literature, life science journals, and online books. When invoked by an AI agent, it can search for relevant research papers, clinical studies, and scientific publications, providing access to the latest evidence-based medical research to support clinical decision-making.

# Questionnaire Interviewing Expert

Source: https://docs.corti.ai/agentic/experts/questionnaire-interviewing

Learn about how the Questionnaire Interviewing expert works

Agent framework and tools are currently `under development`. API details subject to change ahead of general release.

The **Questionnaire Interviewing** expert enables AI agents to conduct structured interviews and questionnaires, guiding conversations to collect specific information in a systematic manner.

This expert is ideal for patient intake, clinical assessments, and any scenario where structured data collection is required.

## Capabilities

The Questionnaire Interviewing expert can:

* Conduct structured interviews following predefined questionnaires

* Guide conversations to collect specific information

* Adapt questioning based on responses

* Ensure comprehensive data collection

## Use Cases

* Patient intake and history taking

* Clinical assessments and screenings

* Research data collection